Technical SEO for 2026: How to Build a Website Google and AI Search Can Crawl, Index and Surface

Technical SEO is the foundation that determines whether your website gets found or buried, no matter how good your content is. Search engines like Google, and the AI platforms now reshaping how people discover information, can only surface your pages if they can crawl, render and index them without friction. If your site has technical barriers, your pages can be invisible to both humans and machines. This guide covers every major component of technical SEO, explains how it connects to AI search visibility in 2026, and shows you what a proper technical audit looks like in practice.

Technical SEO Guide

Technical SEO is the process of improving your website’s infrastructure so search engines can crawl, index and render your pages properly, which helps your content compete in both Google Search and AI search experiences. It covers essentials such as site speed, mobile usability, internal architecture, structured data, canonicals and crawl controls, all of which affect whether search engines can access and understand your content. That matters commercially: Google now uses the mobile version of content for indexing and ranking, and Google has also reported that 53% of mobile visits are abandoned if a page takes longer than three seconds to load.

Turn Technical SEO Into Revenue Growth

Strong technical foundations are only half the equation. Our SaaS SEO case study shows how a B2B legal tech company went from technical issues limiting growth to generating $1.31M in attributed revenue with a 1,909% ROI in 12 months - combining technical fixes with an AI SEO strategy. If you want to see how technical SEO fits into a complete AI SEO campaign, book a 45-minute Discovery Session with me.

Why Technical SEO Matters More Than Ever

Technical SEO has always mattered, but its importance has grown sharply in 2026 for two interconnected reasons:

- Google is placing more weight on user experience signals, including page speed, interactivity and mobile usability.

- AI search interfaces are changing how content gets discovered, with some third-party platforms introducing crawler-specific considerations, while Google’s AI features still rely on standard Search eligibility requirements.

Google's User Experience Signals Have Become Ranking Factors

Google's ranking systems use Core Web Vitals as part of page experience, so loading speed, visual stability and interactivity can all influence where your pages appear in search results. Strong scores support a better user experience and can help search performance, but Google says good Core Web Vitals scores alone do not guarantee top rankings.

The shift to mobile-first indexing has raised the stakes even further. Google now uses the mobile version of your content for indexing and ranking, so your site’s mobile experience directly affects how well it can perform in search. If key content, usability or performance falls short on mobile, strong desktop design alone will not make up for it.

Load speed is particularly unforgiving. Google has reported that 53% of mobile visits are abandoned if a page takes longer than three seconds to load, and a one-second delay in mobile load time can reduce conversion rates by up to 20%. These are not soft metrics - they represent real revenue at risk from technical neglect.

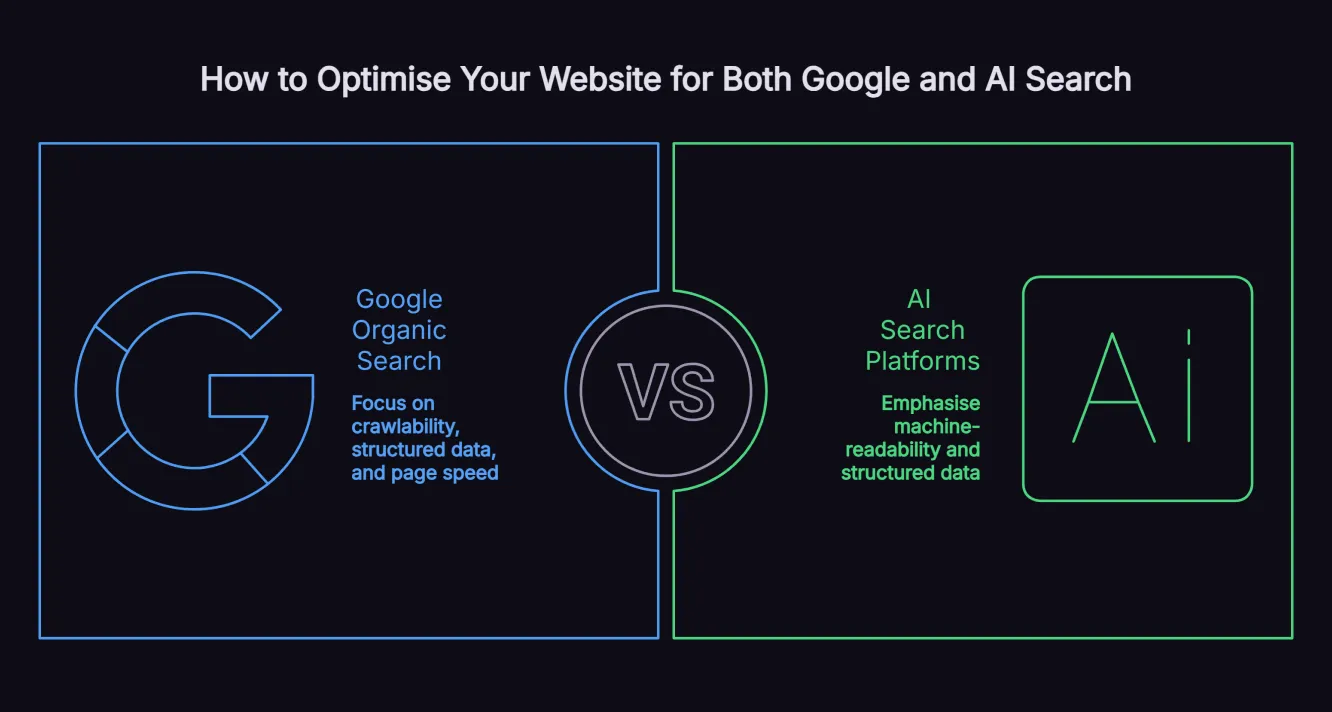

AI Search Platforms Require Different Technical Conditions

The rise of platforms like ChatGPT, Perplexity, Google AI Overviews and Microsoft Copilot has added a new technical dimension to SEO. AI visibility extends beyond Google Search, but the technical rules vary by platform. For Google AI Overviews and AI Mode, Google says there are no additional technical requirements beyond normal Search eligibility and snippet eligibility. For third-party platforms like ChatGPT and Perplexity, crawler access and HTML accessibility can affect discovery and citation.

Technical SEO for AI search is about making your site easy for machines to access, interpret and retrieve at scale. That includes:

- Clean, crawlable URLs

- Accurate XML sitemaps

- Accessible HTML content

- Structured data that clarifies page meaning

- Allowing relevant crawlers where appropriate, such as OpenAI’s OAI-SearchBot and PerplexityBot

If your content is blocked, buried behind weak technical implementation or difficult to parse, it is less likely to be surfaced or cited in AI-driven experiences.

This is also why heavily client-side-rendered websites can create problems. Google can render JavaScript, but it still recommends making important content available in ways that are easy to crawl and process. For AI platforms and non-Google retrieval systems, rendering behaviour is less consistent, so critical content, metadata and page structure should not depend on JavaScript alone.

In practice, technical SEO in 2026 means building a site that is fast, accessible, structured and machine-readable across both traditional search engines and emerging AI platforms. For a deeper look at this shift, our guide to AI SEO strategy explains how to optimise for Google and AI search visibility together.

Core Components of Technical SEO

Technical SEO covers a wide range of elements. Here is what each core component does and why it matters.

Site Architecture and URL Structure

Your site's architecture is the starting point for technical SEO. A logical, hierarchical structure helps search engine bots crawl efficiently, distributes link authority to important pages and makes navigation intuitive for users.

A good site structure should:

- Use clean, descriptive URLs with hyphens separating words

- Avoid deeply nested pages, with important pages ideally reachable within three clicks of the homepage

- Use breadcrumb navigation to reinforce hierarchy for both users and search engines
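The three-click guideline above can be checked with a breadth-first search over your internal links. The sketch below is a minimal illustration, not a crawler: the `site_links` graph and every URL in it are hypothetical, and in practice you would build the graph from a crawl export.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks from `home` to each reachable URL."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal-link graph: page -> pages it links to.
site_links = {
    "/": ["/services/", "/blog/"],
    "/services/": ["/services/technical-seo/"],
    "/blog/": ["/blog/page/2/"],
    "/blog/page/2/": ["/blog/page/3/"],
    "/blog/page/3/": ["/blog/old-post/"],
    "/orphan/": ["/"],  # links out, but nothing links to it
}

depths = click_depths(site_links)
too_deep = sorted(url for url, depth in depths.items() if depth > 3)
orphans = sorted(set(site_links) - set(depths))

print("More than three clicks from home:", too_deep)
print("Not reachable from home:", orphans)
```

Pages that only surface through long pagination trails, like the old blog post here, are usually the ones that end up too deep, which is where stronger internal linking helps.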

Internal linking works hand-in-hand with site architecture. To strengthen it:

- Use descriptive anchor text instead of generic phrases like “click here”

- Link strategically to key service pages and pillar content to pass authority and improve ranking potential

Our keyword mapping guide explains how to align site architecture with your target keywords to maximise ranking potential across your content cluster.

Crawlability and Robots.txt

Before a search engine can index your pages, it must first crawl them. Crawlability issues prevent bots from accessing content you want indexed, while low-value pages left open to crawling can waste crawl budget on content that adds no value.

The robots.txt file controls which parts of your site search engine bots can and cannot access. Common mistakes include:

- Blocking JavaScript or CSS files that search engines need to render pages correctly

- Blocking entire subfolders that contain valuable content

For AI search, the same principle applies with an additional layer. For non-Google AI platforms, review crawler access deliberately. OpenAI recommends allowing OAI-SearchBot for ChatGPT search visibility, and Perplexity recommends allowing PerplexityBot if you want visibility there. Google AI Overviews and AI Mode do not require a separate AI crawler allowance; pages need to be indexed and eligible to appear with a snippet in Google Search.
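One way to review crawler access is to test your robots.txt rules against the user agents you care about. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content and URLs are hypothetical, and the parser applies rules in file order, so treat the result as a first check rather than an exact model of how each crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; the paths and rules are illustrative only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Disallow: /
"""

AGENTS = ["Googlebot", "OAI-SearchBot", "PerplexityBot"]


def crawl_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report whether each user agent may fetch `url` under `robots_txt`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawl_access(ROBOTS_TXT, "https://example.com/guides/technical-seo/", AGENTS)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

In this sample file PerplexityBot is blocked site-wide, which is the kind of leftover rule a deliberate review should catch if you want visibility on that platform.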

XML Sitemaps and Indexing

An XML sitemap acts as a directory of all the pages you want search engines to find and index. Submitting an updated sitemap through Google Search Console signals to Google which pages are most important and keeps the index aligned with any structural changes you make to your site.

Effective sitemaps should:

- Include only canonical URLs

- Exclude noindex pages

- Use accurate lastmod timestamps when content has materially changed
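The sitemap rules above can be sketched as a small generator that skips non-canonical and noindex URLs and writes a `lastmod` date for the rest. This is a minimal sketch: the `pages` records and their field names are assumptions for illustration, and a real build would read them from your CMS or crawl data.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict]) -> str:
    """Build sitemap XML from page records, keeping only indexable canonicals."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        # Skip noindex pages and URLs that canonicalise to a different URL.
        if page["noindex"] or page["canonical"] != page["url"]:
            continue
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page["url"]
        ET.SubElement(url_el, "lastmod").text = page["last_modified"].isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical page records.
pages = [
    {"url": "https://example.com/", "canonical": "https://example.com/",
     "noindex": False, "last_modified": date(2026, 1, 15)},
    {"url": "https://example.com/?ref=nav", "canonical": "https://example.com/",
     "noindex": False, "last_modified": date(2026, 1, 15)},
    {"url": "https://example.com/search", "canonical": "https://example.com/search",
     "noindex": True, "last_modified": date(2025, 11, 2)},
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Only the homepage survives the filter here; the tracking-parameter variant and the noindex search page are left out.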

Indexing issues are separate from crawling issues. A page can be crawled but not indexed if it is set to noindex, returns an error, or is seen as duplicate content. Google Search Console’s Page indexing report is the fastest way to identify pages that are crawled but not indexed and why.

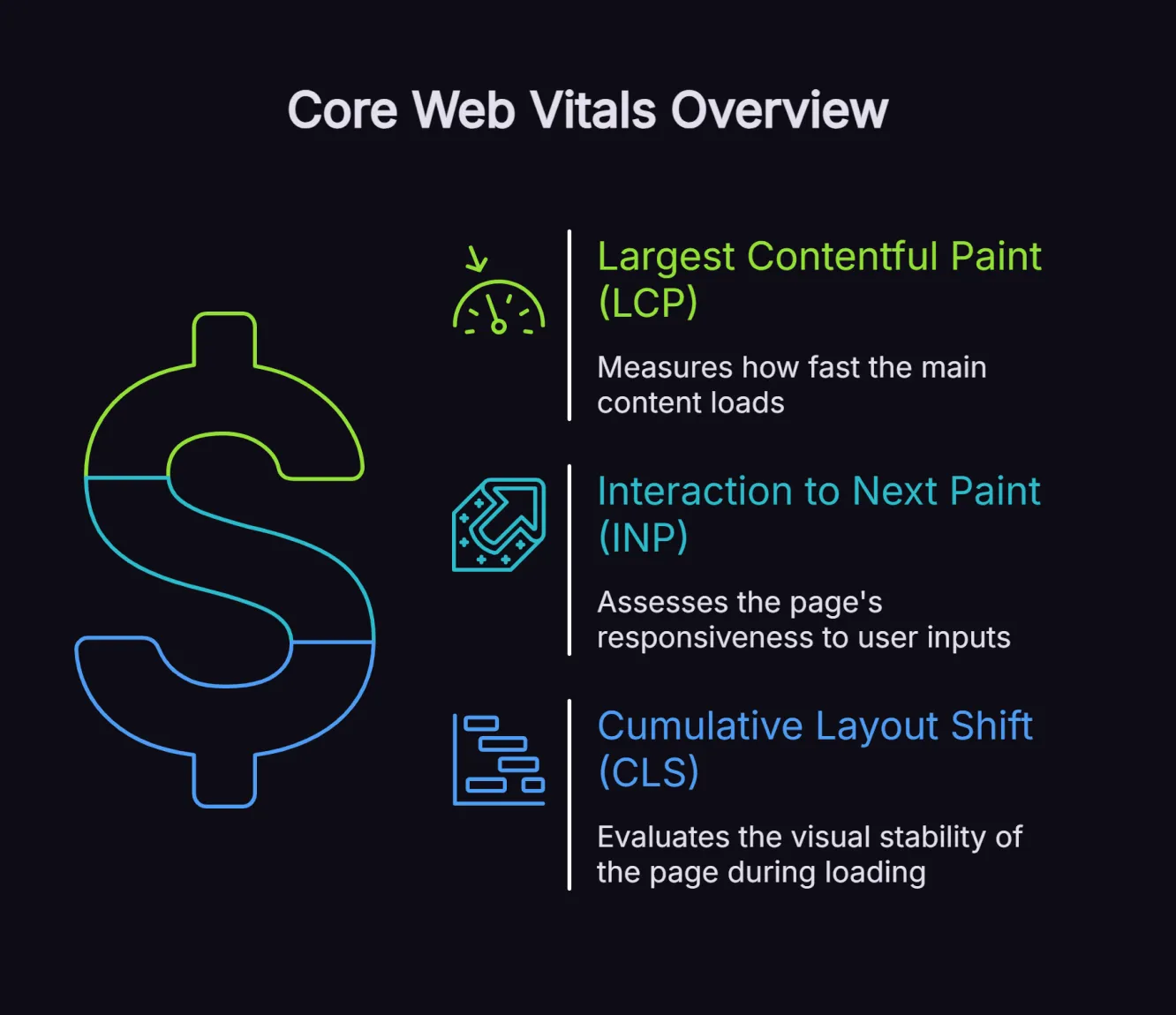

Core Web Vitals and Page Speed

Google's Core Web Vitals measure three dimensions of user experience:

- Largest Contentful Paint (LCP): How fast the main content loads. Google's threshold is under 2.5 seconds.

- Interaction to Next Paint (INP): How responsive the page is to user inputs. Target under 200ms.

- Cumulative Layout Shift (CLS): How visually stable the page is as it loads. Target under 0.1.
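To make the thresholds concrete, the sketch below rates a page's metrics against them. The "good" limits are the ones listed above; the "poor" boundaries (4 seconds, 500ms and 0.25) come from Google's published Core Web Vitals guidance rather than this article, and the sample measurements are hypothetical. Google assesses these metrics at the 75th percentile of real page loads, so feed in field data where you have it.

```python
# Thresholds as (good_max, poor_min). Values up to good_max rate "good";
# values above poor_min rate "poor"; anything between needs improvement.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate(metric: str, value: float) -> str:
    """Classify one Core Web Vitals measurement."""
    good_max, poor_min = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= poor_min:
        return "needs improvement"
    return "poor"


# Hypothetical 75th-percentile field data for one page template.
measurements = {"LCP": 3.1, "INP": 180, "CLS": 0.31}
ratings = {metric: rate(metric, value) for metric, value in measurements.items()}

for metric, rating in ratings.items():
    print(f"{metric}: {measurements[metric]} -> {rating}")
```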

Pages that meet Google’s recommended Core Web Vitals thresholds are better positioned to deliver a strong user experience, which can support search performance. However, Core Web Vitals are only one part of Google’s broader ranking systems, so they should be treated as an important technical foundation rather than a guaranteed ranking advantage on their own.

Common improvements that lift Core Web Vitals scores include:

- Compressing and converting images to WebP format

- Enabling browser caching

- Minifying CSS, JavaScript and HTML

- Using a Content Delivery Network (CDN)

- Deferring non-critical JavaScript

Google PageSpeed Insights is the standard tool for diagnosing Core Web Vitals issues, as it provides both lab data and real-user field data.

Mobile-First Optimisation

Google now uses mobile-first indexing across the web, so the mobile version of your site is what gets evaluated and ranked. Your mobile pages need to expose the same core content, metadata and structured data as desktop. Mobile optimisation also goes beyond responsive design. Prioritise the following:

- Responsive layouts

- Tap targets (buttons and links) large enough to interact with on a touchscreen

- Removing intrusive interstitials that block content

- Testing navigation for mobile usability, not just desktop

- Running real-device testing, not just emulator checks

For mobile diagnosis, use Lighthouse, real-device testing and the Search Console reports that cover page experience, especially the Core Web Vitals and HTTPS reports.

HTTPS and Site Security

HTTPS is a confirmed Google ranking signal and a basic trust requirement for users. Browsers mark sites still running on HTTP as not secure, which can increase bounce rates and undermine trust. Migrating to HTTPS requires obtaining an SSL/TLS certificate, redirecting HTTP URLs to their HTTPS equivalents and updating all internal links from http to https.

For AI search platforms, treat HTTPS as essential site hygiene, but do not assume it directly increases AI citation likelihood without platform-specific evidence.

Security also extends to protecting crawl budget - blocking login pages, admin panels, cart URLs, and other state-changing URLs with robots.txt keeps crawl resources focused on pages that should rank.

Structured Data and Schema Markup

Structured data is markup added to your website's code that helps search engines - and AI platforms - understand what your content means, not just what it says. It is implemented using JSON-LD format and Schema.org vocabulary.

Structured data helps search engines understand your content more clearly and can make eligible pages appear with enhanced search features, such as review snippets, product details and other rich results. Despite its value, many websites still miss opportunities to use structured data to improve how their content is interpreted and displayed in search.

For AI search, structured data can help make your content easier for machines to interpret and classify. It provides additional context about the meaning of a page and can support clearer extraction of key information, but it does not guarantee AI citations or rich visibility on its own.

The most impactful schema types for most sites include:

- FAQPage: Helps structure Q&A content, but does not directly trigger Google AI Overviews

- HowTo: Structures step-by-step instructions; Google has retired its How-to rich results, so treat it as a clarity aid rather than a rich-result trigger

- Organization: Establishes entity identity for knowledge graphs (Schema.org spells the type with a "z")

- BreadcrumbList: Improves site structure signals

- Article: Adds author and date signals for content authority
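As an illustration of JSON-LD with Schema.org vocabulary, the sketch below builds BreadcrumbList markup and wraps it in a script tag ready for the page head. The breadcrumb names and URLs are hypothetical, and the helper functions are our own rather than part of any library.

```python
import json


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> dict:
    """Build Schema.org BreadcrumbList data from (name, url) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }


def as_script_tag(data: dict) -> str:
    """Serialise data as a JSON-LD script tag for the base HTML."""
    # Escape "</" so a string value can never close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical breadcrumb trail.
trail = [
    ("Home", "https://example.com/"),
    ("Guides", "https://example.com/guides/"),
    ("Technical SEO", "https://example.com/guides/technical-seo/"),
]

tag = as_script_tag(breadcrumb_jsonld(trail))
print(tag)
```

Generating the markup from the same data that renders the visible breadcrumbs keeps the two in sync. Validate the output with Google's Rich Results Test before you ship it.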

Our pillar content strategy guide covers how to build the content structure that structured data is designed to enhance.

Canonicalisation and Duplicate Content

Duplicate content confuses search engines. When multiple URLs contain identical or near-identical content, search engines have to choose which version to rank, and they may not choose the one you intended. Canonical tags signal which version of a page is the definitive one, helping consolidate ranking signals to a single URL.

Common duplicate content issues include:

- HTTP and HTTPS versions of the same page both being accessible

- www and non-www versions both being indexed

- Trailing-slash and non-trailing-slash variants

- Paginated pages without proper canonical handling

- Print-friendly versions of pages accessible to bots

Canonical tags are not the only solution. Use 301 redirects when one URL permanently replaces another, and noindex tags to keep pages out of the index when they serve a user purpose but should not rank (such as internal search results pages or filtered category views).
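To find the protocol, www and trailing-slash duplicates described above, you can normalise crawled URLs to one preferred form and group the ones that collide. The sketch below assumes a hypothetical policy of HTTPS, non-www and no trailing slash; swap in your own preference, and note that the sample URLs are illustrative.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def normalise(url: str) -> str:
    """Map a URL to its preferred form: https, non-www, no trailing slash."""
    parts = urlsplit(url)
    host = parts.netloc.lower().removeprefix("www.")
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(("https", host, path, parts.query, ""))


def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Group crawled URLs that collapse to the same preferred URL."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalise(url)].append(url)
    return {key: found for key, found in groups.items() if len(found) > 1}


# Hypothetical URLs from a crawl export.
crawled = [
    "http://example.com/pricing",
    "https://www.example.com/pricing/",
    "https://example.com/pricing",
    "https://example.com/about",
]

groups = duplicate_groups(crawled)
for preferred, variants in groups.items():
    print(preferred, "<-", variants)
```

Each group is a candidate for a 301 redirect or a canonical tag pointing at the preferred URL.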

JavaScript Rendering

JavaScript is a growing challenge for technical SEO. Many modern websites use frameworks like React, Angular or Vue to deliver content dynamically, but not every crawler handles client-side rendering in the same way. If your key content, navigation or structured data relies heavily on JavaScript, some platforms may not process it fully.

For traditional Google search, Googlebot does render JavaScript, but with delays - meaning Google may index an older or incomplete version of your page. Server-side rendering or static site generation are the cleanest solutions. Where these are not feasible, the key principle is progressive enhancement: deliver core content and structured data in the base HTML, then layer on JavaScript functionality.
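A quick way to test progressive enhancement is to inspect the raw HTML response, before any JavaScript runs, and confirm that key copy and structured data are already there. The sketch below uses Python's standard `html.parser`; the `BaseHTMLAudit` class, the sample markup and the required phrases are all hypothetical.

```python
import json
from html.parser import HTMLParser


class BaseHTMLAudit(HTMLParser):
    """Collect text and JSON-LD blocks from raw, unrendered HTML."""

    def __init__(self):
        super().__init__()
        self.text = []
        self.jsonld = []
        self._skip = None        # tag whose contents are ignored as text
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            self._in_jsonld = dict(attrs).get("type") == "application/ld+json"
            self._skip = tag
        elif tag == "style":
            self._skip = tag

    def handle_endtag(self, tag):
        if tag == self._skip:
            if self._in_jsonld:
                self.jsonld.append(json.loads("".join(self._buffer)))
                self._buffer = []
            self._skip = None
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)
        elif self._skip is None and data.strip():
            self.text.append(data.strip())


# Hypothetical server response: the call to action is injected later by JavaScript.
RAW_HTML = """
<html><head>
<title>Technical SEO Audit</title>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article", "headline": "Technical SEO Audit"}
</script>
<script>window.app = {};</script>
</head><body>
<h1>Technical SEO Audit</h1>
<div id="root"></div>
</body></html>
"""

audit = BaseHTMLAudit()
audit.feed(RAW_HTML)
audit.close()

required = ["Technical SEO Audit", "Book a consultation"]
base_text = " ".join(audit.text)
missing = [phrase for phrase in required if phrase not in base_text]
types = [block["@type"] for block in audit.jsonld]

print("Schema types in base HTML:", types)
print("Phrases missing without JavaScript:", missing)
```

Anything reported as missing exists only after client-side rendering, so crawlers that do not execute JavaScript will not see it.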

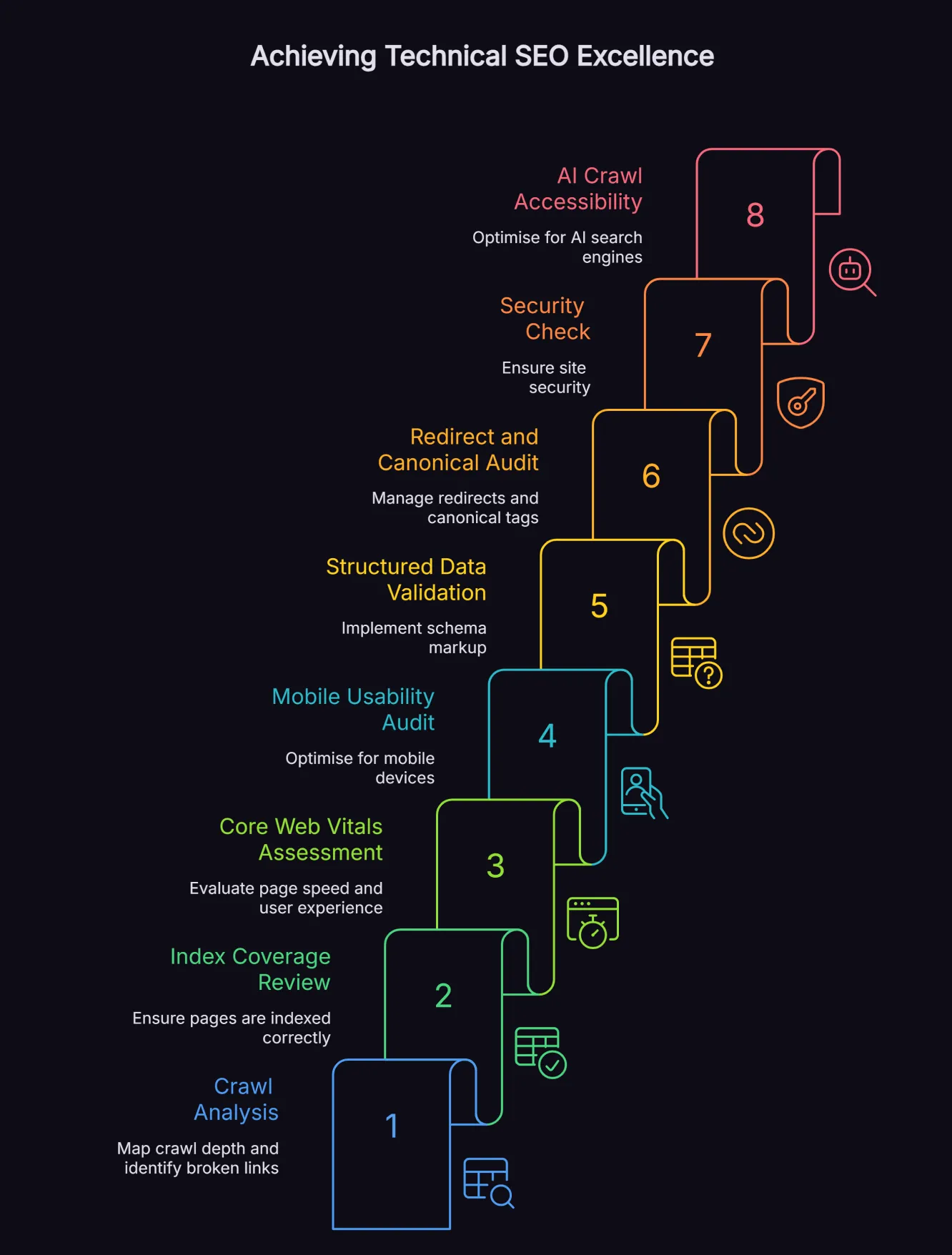

What a Technical SEO Audit Covers

A technical SEO audit is a systematic analysis of your site's infrastructure to identify issues limiting crawlability, indexation, performance, and visibility.

For established businesses, audits should be conducted:

- At least once per year

- After major site migrations

- Whenever organic traffic drops unexpectedly

A thorough technical SEO audit covers:

- Crawl Analysis: Using tools like Screaming Frog or Ahrefs Site Audit to map crawl depth, identify orphan pages, broken internal links, redirect chains and blocks in robots.txt

- Index Coverage Review: Using Google Search Console to identify pages excluded from the index, soft 404 errors, crawl anomalies and indexing status across your site

- Core Web Vitals Assessment: Using PageSpeed Insights and Google Search Console’s Core Web Vitals reporting to identify performance gaps by device and page type

- Mobile UX Audit: Reviewing key templates and pages for mobile layout, usability and performance issues using Lighthouse, PageSpeed Insights and real-device testing

- Structured Data Validation: Using Google's Rich Results Test to verify schema markup is correctly implemented and error-free

- Redirect and Canonical Audit: Mapping redirect chains, loop redirects and canonical inconsistencies

- Security Check: Confirming HTTPS is correctly implemented across all pages and resources

- AI Crawl Accessibility: Verifying that relevant AI crawlers, such as OpenAI’s OAI-SearchBot, PerplexityBot and Anthropic’s documented search-related bots, are allowed in robots.txt and that core content is present in base HTML
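The redirect portion of an audit can be sketched as a walk through a redirect map that flags chains and loops. The `redirects` mapping below is hypothetical; in practice it would come from a crawler export, and the `max_hops` cap is an arbitrary safety limit.

```python
def trace_redirects(start: str, redirects: dict[str, str], max_hops: int = 10):
    """Follow `start` through a source -> target redirect map.

    Returns (path, status), where status is "ok" for zero or one hop,
    "chain" for two or more hops, and "loop" if a URL repeats.
    """
    path = [start]
    while path[-1] in redirects and len(path) <= max_hops:
        target = redirects[path[-1]]
        if target in path:
            return path + [target], "loop"
        path.append(target)
    hops = len(path) - 1
    status = "chain" if hops > 1 else "ok"
    return path, status


# Hypothetical redirect map.
redirects = {
    "/old": "/interim",
    "/interim": "/new",
    "/a": "/b",
    "/b": "/a",
    "/promo": "/offers",
}

for url in ["/old", "/a", "/promo"]:
    path, status = trace_redirects(url, redirects)
    print(f"{status}: {' -> '.join(path)}")
```

Chains are usually fixed by pointing the first URL straight at the final destination, while loops need one of the redirects removed.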

For businesses looking to integrate technical SEO with a full AI search strategy, our AI SEO strategy service includes a complete technical audit as part of the strategy development process, with findings mapped directly to revenue growth opportunities.

Technical SEO and AI Search: The Critical Connection

The emergence of AI Overviews, ChatGPT, Perplexity and Microsoft Copilot has created a dual-track SEO reality. You now need to be technically optimised for both traditional Google indexing and AI retrieval systems.

Traffic from generative AI platforms is growing quickly. Adobe reported that AI-driven referral traffic to U.S. websites increased more than tenfold between July 2024 and February 2025, while Forrester has reported that 89% of B2B buyers now use generative AI in at least one phase of the buying process.

The technical requirements that help AI platforms cite your content are closely aligned with good traditional SEO practice, but with some important additions:

- Server-side rendering for any content you want AI platforms to access

- Clean, accessible sitemaps with timestamps to signal recency

- Comprehensive structured data using FAQPage and HowTo schema

- Core content delivered without JavaScript dependency

- AI crawlers explicitly allowed in robots.txt by name

Our guide to how AI search works explains the technical pipeline that AI platforms use to retrieve and cite web content, and how your site needs to be configured to appear in those answers.

Technical SEO and AI SEO are not separate disciplines; they share the same foundation. A site that is fast, crawlable, structured and secure performs better in both Google search and AI-generated answers. Our B2B case study demonstrates this directly: technical improvements combined with AI-optimised content drove $5.9M in attributed revenue and a 6,864% ROI over 17 months for a property management client.

Frequently Asked Questions

What is the difference between technical SEO and on-page SEO?

Technical SEO focuses on your site's infrastructure: crawlability, page speed, site architecture, structured data and security. On-page SEO focuses on the content within individual pages - keyword usage, headings, meta titles and body copy. Both are needed for rankings. Technical SEO ensures search engines can access and process your pages; on-page SEO ensures those pages are relevant and authoritative for target queries.

How often should a technical SEO audit be conducted?

For most established business websites, run a full technical audit at least annually and monitor key technical signals monthly. Larger, faster-moving or more complex sites may justify quarterly audits. You should also audit after any significant site migration, CMS change, or major redesign, and whenever you notice an unexplained drop in organic traffic or indexing coverage. Monthly monitoring via Google Search Console should be standard practice to catch issues between full audits.

Does technical SEO affect visibility in ChatGPT and other AI platforms?

Yes, but the requirements differ by platform. For ChatGPT search, make sure important pages are crawlable in HTML and that your robots.txt does not block OAI-SearchBot. For Perplexity, allow PerplexityBot if you want visibility there. For Google AI Overviews, the key requirement is that the page is indexed and eligible to appear with a snippet in Google Search.

How long does it take to see results from technical SEO fixes?

Simple fixes like resolving crawl errors or submitting an updated sitemap can produce results within days to weeks as search engines re-crawl your site. Larger improvements like Core Web Vitals optimisation or a structured data overhaul typically show measurable results within one to three months. Site migrations with technical changes can take three to six months to fully stabilise in search rankings.

What tools are used for technical SEO?

The essential technical SEO toolkit includes Google Search Console, Google PageSpeed Insights, Screaming Frog or Ahrefs Site Audit, Google’s Rich Results Test and Lighthouse. For AI visibility, use the Search Console Performance report to track Google AI Overviews and AI Mode within overall search traffic, alongside analytics and Ahrefs Brand Radar for AI citation tracking. Google rolls AI Overviews and AI Mode into broader Search Console reporting rather than a dedicated AI Overview report.

What is crawl budget, and why does it matter?

Crawl budget refers to the number of pages Google is willing to crawl on your site within a given timeframe. For large sites with thousands of pages, crawl budget becomes a limiting factor. If Google spends its crawl budget on low-value pages like thin content, filtered URLs or duplicate pages, important pages may not be crawled and indexed as frequently. Managing crawl budget involves removing or blocking low-value URLs, fixing redirect chains and strengthening internal links to priority pages.

The Foundation Everything Else Depends On

Technical SEO is the foundation that makes every other SEO investment work harder, from content and links to AI visibility. As search expands across Google, ChatGPT and AI Overviews, the sites that win are the ones search engines can crawl, index and understand without friction. Get the fundamentals right, and you give your website a stronger chance to perform across every search surface that matters. Explore our technical SEO consulting if you want every fix tied to measurable growth.
