SEO Technical Audit: How to Find and Fix What’s Holding Your Site Back

A strong content strategy and a healthy backlink profile are not always enough to move the rankings needle. When a website plateaus despite solid content investment, the problem is often sitting in the technical foundation - invisible to the naked eye but visible to every search engine crawler that visits. An SEO technical audit is the diagnostic process that surfaces those hidden issues and turns them into a prioritised fix list. This guide explains exactly what a technical audit covers, how to run one and why the process looks different than it did even two years ago.

Quick Guide: What Is an SEO Technical Audit?

An SEO technical audit is a review of the technical factors that affect how well search engines can crawl, index and understand your site, including crawlability, indexation, page speed, Core Web Vitals, internal linking, site architecture, schema markup and mobile usability. It matters because technical issues can suppress visibility even when content is strong: Google uses mobile-first indexing, and Google data shows that as page load time increases from one second to 10 seconds, the probability of a mobile visitor bouncing rises by 123%.

Turn Technical Issues into Revenue with Expert Help

Understanding the issues is the first step. Resolving them in a way that ties directly to revenue is where the real work happens. Our technical SEO consulting service goes beyond a raw data export - you receive a prioritised fix list organised by revenue impact, full schema markup implementation and ongoing technical monitoring built for both Google and AI search. Our broader AI-first SEO approach helped a B2B client generate $5.9M in revenue with a 6,864% ROI over 17 months. Technical improvements were one part of that wider campaign from day one.

Explore Technical SEO Consulting

What Does an SEO Technical Audit Cover?

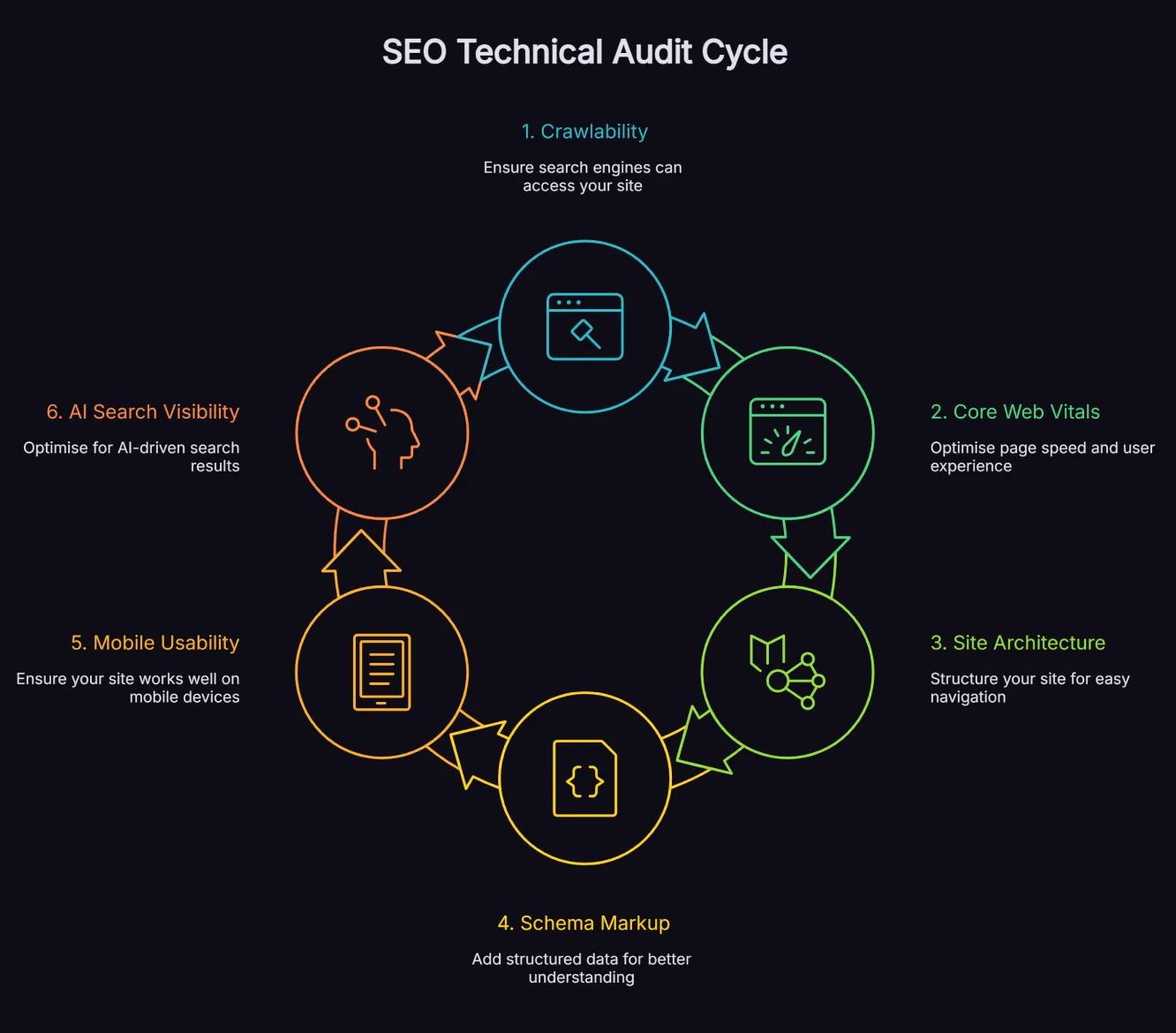

A proper audit covers six core areas. Each one is capable of limiting your search visibility independently, but they often interact - a slow site with poor schema and a messy crawl structure compounds the damage across all three areas simultaneously.

1. Crawlability and Indexation

Search engines discover your pages by following links from one URL to the next. Your content may never be discovered if there are broken crawl paths caused by any of the following:

- Redirect chains

- Orphan pages

- Blocked resources

- Incorrect robots.txt directives

The crawlability audit maps how efficiently Googlebot moves through your site and identifies any barriers stopping it from reaching important pages.
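
As a minimal sketch of one crawlability check, the snippet below tests a list of important URLs against a set of robots.txt rules using Python's standard library. The robots.txt content and the example.com URLs are hypothetical, and Python's parser may not match Googlebot's handling of wildcard rules exactly, so treat the result as a first pass rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in a real audit you would fetch the live file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /services/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical pages that should be reachable by crawlers.
important_pages = [
    "https://example.com/services/technical-seo/",
    "https://example.com/blog/audit-guide/",
]

print(blocked_urls(ROBOTS_TXT, important_pages))
```

Here the service page is reported as blocked because of the overly broad `Disallow: /services/` rule, which is the kind of directive error the audit is meant to surface.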

Indexation analysis goes one step further. Just because a page is crawled does not mean it is indexed. The audit reviews which pages are included in Google's index, which are excluded and why. Common issues include:

- Duplicate content creating indexation confusion

- Noindex tags applied to pages that should rank

- XML sitemap problems that prevent efficient discovery
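
To illustrate the noindex check, here is a rough sketch that scans a page's HTML for a robots meta tag containing `noindex`. It assumes you already have the HTML as a string, and it does not cover the `X-Robots-Tag` HTTP header, which can carry the same directive.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() in {"robots", "googlebot"}:
            content = attr.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, follow".
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]


def has_noindex(html: str) -> bool:
    """Return True if the page's meta robots directives include noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives
```

Running `has_noindex` across the pages you expect to rank, and flagging any that return `True`, gives a quick list of candidates to review against the Page indexing report.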

2. Core Web Vitals and Page Speed

Core Web Vitals are Google's standardised measurement of user experience:

- Largest Contentful Paint (LCP) measures loading performance

- Interaction to Next Paint (INP) measures responsiveness

- Cumulative Layout Shift (CLS) measures visual stability

Poor scores on any of these metrics can affect your ranking competitiveness, particularly in mobile search.

Google reported that as page load time increases from one second to 10 seconds, the probability of a mobile visitor bouncing increases by 123%. Speed problems are not just a ranking issue - they are a conversion issue. A technical audit assesses Core Web Vitals against Google's thresholds for every key page on your site, not just your homepage, and identifies which specific elements are responsible for slow scores.
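
As a small sketch of the threshold assessment, the function below rates a 75th-percentile value against Google's published boundaries for each metric. The page values in the usage example are hypothetical.

```python
# Google's published boundaries, assessed at the 75th percentile of page loads:
# (upper limit for "good", upper limit for "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless score
}


def rate(metric: str, p75: float) -> str:
    """Classify a 75th-percentile value as good, needs improvement or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if p75 <= good:
        return "good"
    if p75 <= needs_improvement:
        return "needs improvement"
    return "poor"


# Hypothetical field data for one key page.
page = {"LCP": 3.1, "INP": 180, "CLS": 0.31}
for metric, value in page.items():
    print(metric, value, rate(metric, value))
```

Rating each key page this way makes it easier to see which metric is failing on which template, rather than relying on a single site-wide score.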

3. Site Architecture and Internal Linking

How your pages are organised and connected affects both crawl efficiency and the distribution of ranking signals across your site. Pages buried too deep in the site structure receive less crawl attention and fewer internal links, which weakens their ranking potential regardless of content quality.

The architecture audit reviews:

- Crawl depth

- URL structure

- Breadcrumb navigation

- Internal linking patterns

It identifies orphan pages with no internal links pointing to them and highlights opportunities to restructure navigation so that authority flows more efficiently to the pages that matter most for revenue.
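
Crawl depth and orphan detection can be sketched with a breadth-first search over the internal link graph. The example assumes you have exported the graph from a crawler as a dictionary of page to outgoing links, plus a list of known URLs (for instance from the XML sitemap); all paths shown are hypothetical.

```python
from collections import deque


def crawl_depths(links: dict[str, list[str]], start: str) -> dict[str, int]:
    """Return the minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links: dict[str, list[str]], known_urls: list[str], start: str) -> list[str]:
    """Return known URLs that no crawled page links to (the start page is excluded)."""
    linked = {target for targets in links.values() for target in targets}
    return sorted(set(known_urls) - linked - {start})


# Hypothetical internal link graph and sitemap.
links = {
    "/": ["/services/", "/blog/"],
    "/services/": ["/services/technical-seo/"],
    "/blog/": ["/blog/audit-guide/"],
}
sitemap_urls = ["/", "/services/", "/services/technical-seo/", "/blog/",
                "/blog/audit-guide/", "/case-studies/old/"]

print(crawl_depths(links, "/"))
print(orphan_pages(links, sitemap_urls, "/"))
```

Pages with a high depth value or no inbound links at all are the natural starting points for restructuring navigation.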

4. Schema Markup and Structured Data

Schema markup is the structured data layer that tells search engines - and AI systems - exactly what your content means. Without it, Google and AI platforms have to infer context from unstructured text. With it, you give them a clear, machine-readable signal about your:

- Business

- Services

- Content type

- Relationships

The schema audit reviews every key page type:

- Service pages

- Blog posts

- Case studies

- The homepage and any specialised content

It checks:

- Whether schema is present

- Whether it is implemented correctly in JSON-LD format

- Whether it covers the types most likely to improve rich result eligibility and make the content easier for search engines to interpret

Structured data helps search engines understand your content and can make pages eligible for rich results, which may improve visibility and click-through rate, but there is no guaranteed uplift. For businesses focused on stronger search visibility and machine-readable content, schema is a valuable part of a solid technical SEO foundation. As noted in our AI SEO guide, clear structure and consistent markup can make content easier for search engines and AI systems to interpret, even though platforms do not publish a simple schema-to-citation rule.
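
For illustration, the sketch below builds a basic `Article` JSON-LD snippet for a blog post. The function name and the example values are hypothetical, and the properties shown are a small subset chosen for the example rather than a complete or recommended set for any particular rich result.

```python
import json


def article_json_ld(headline: str, author_name: str, date_published: str, url: str) -> str:
    """Return a <script> tag containing Article structured data in JSON-LD format."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2025-01-15"
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical values for a blog post.
print(article_json_ld(
    "SEO Technical Audit Guide",
    "Jane Doe",
    "2025-01-15",
    "https://example.com/blog/audit-guide/",
))
```

Generating the markup from the same fields that populate the visible page is one way to keep schema and content aligned.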

5. Mobile Usability

Google uses mobile-first indexing, meaning the mobile version of your site is what it crawls and indexes - not the desktop version. If your mobile experience is compromised, your rankings can suffer even if your desktop site performs well.

The mobile audit checks:

- Responsive design implementation

- Viewport configuration

- Touch target sizing

- Whether critical content or navigation elements are hidden from mobile crawlers through JavaScript rendering

This last point is increasingly important: if your navigation only appears after a user interaction, Googlebot may never see your site structure at all.
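
One rough way to test for this is to look for navigation links in the raw HTML response, before any JavaScript has run. The sketch below assumes navigation lives inside `<nav>` elements, which will not hold for every site, and the HTML samples are hypothetical.

```python
from html.parser import HTMLParser


class NavLinkParser(HTMLParser):
    """Collects href values of <a> tags found inside <nav> elements."""

    def __init__(self) -> None:
        super().__init__()
        self.nav_depth = 0
        self.nav_links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "nav":
            self.nav_depth += 1
        elif tag == "a" and self.nav_depth > 0:
            href = dict(attrs).get("href")
            if href:
                self.nav_links.append(href)

    def handle_endtag(self, tag):
        if tag == "nav" and self.nav_depth > 0:
            self.nav_depth -= 1


def nav_links_in_raw_html(html: str) -> list[str]:
    """Return navigation links present in the HTML without executing JavaScript."""
    parser = NavLinkParser()
    parser.feed(html)
    return parser.nav_links


# Hypothetical responses: one server-rendered menu, one built by JavaScript.
server_rendered = '<nav><a href="/services/">Services</a><a href="/blog/">Blog</a></nav>'
js_only = '<nav id="menu"></nav><script>renderMenu()</script>'

print(nav_links_in_raw_html(server_rendered))
print(nav_links_in_raw_html(js_only))
```

An empty result for the raw HTML does not prove Googlebot cannot see the menu, since Google does render JavaScript, but it flags the page for a closer look with the URL Inspection tool in Search Console.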

6. AI Search Visibility

This is the area that most traditional technical audits ignore entirely. AI search experiences still depend on the fundamentals that support discoverability in search:

- Crawlable pages

- Accessible content

- Clear structure

- Helpful, reliable information

For Google AI features, Google says there are no extra eligibility requirements beyond standard SEO best practices. Strong fundamentals still matter; if pages are to be surfaced in AI Overviews or AI Mode, they need to be:

- Crawlable

- Indexable

- Clearly structured

- Genuinely useful

The AI visibility component of a technical audit reviews whether your site is technically accessible and easy for AI-driven search systems to interpret. That includes checking:

- Schema coverage

- Rendering quality

- Content accessibility

- Whether your structured data matches the content users can actually see

Inconsistencies between markup and visible page content can affect rich-result eligibility and reduce confidence in your structured data. Keep the schema aligned with the page itself. You can learn more about how technical foundations support AI search visibility in our semantic SEO guide.
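
The consistency check can be approximated in code. The sketch below extracts JSON-LD blocks from a page and reports any `headline` value that does not appear in the page text. It is a deliberately narrow illustration: it only looks at a top-level `headline` property, ignores `@graph` structures, and treats all text outside script and style tags as visible.

```python
import json
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Separates JSON-LD script contents from the rest of the page text."""

    def __init__(self) -> None:
        super().__init__()
        self._skip = None  # "json-ld", "other" or None
        self._buffer: list[str] = []
        self.json_ld_blocks: list[str] = []
        self.visible_text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._skip = "json-ld"
        elif tag in ("script", "style"):
            self._skip = "other"

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            if self._skip == "json-ld":
                self.json_ld_blocks.append("".join(self._buffer))
            self._skip, self._buffer = None, []

    def handle_data(self, data):
        if self._skip == "json-ld":
            self._buffer.append(data)
        elif self._skip is None:
            self.visible_text.append(data)


def headline_mismatches(html: str) -> list[str]:
    """Return schema headlines that do not appear in the page text."""
    parser = PageParser()
    parser.feed(html)
    # Normalise whitespace and case before comparing.
    text = " ".join(" ".join(parser.visible_text).split()).lower()
    mismatches = []
    for block in parser.json_ld_blocks:
        data = json.loads(block)
        headline = data.get("headline") if isinstance(data, dict) else None
        if headline and headline.lower() not in text:
            mismatches.append(headline)
    return mismatches
```

The same pattern could be extended to other properties, such as prices or author names, where a mismatch with the visible page is most likely to matter.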

How to Conduct an SEO Technical Audit: Step by Step

Running a proper SEO technical audit requires access to the right data sources before you touch any crawler. Starting with a raw crawl and no baseline context leads to misinterpretation - issues that look significant may be irrelevant, and issues that look minor may be your biggest ranking blockers.

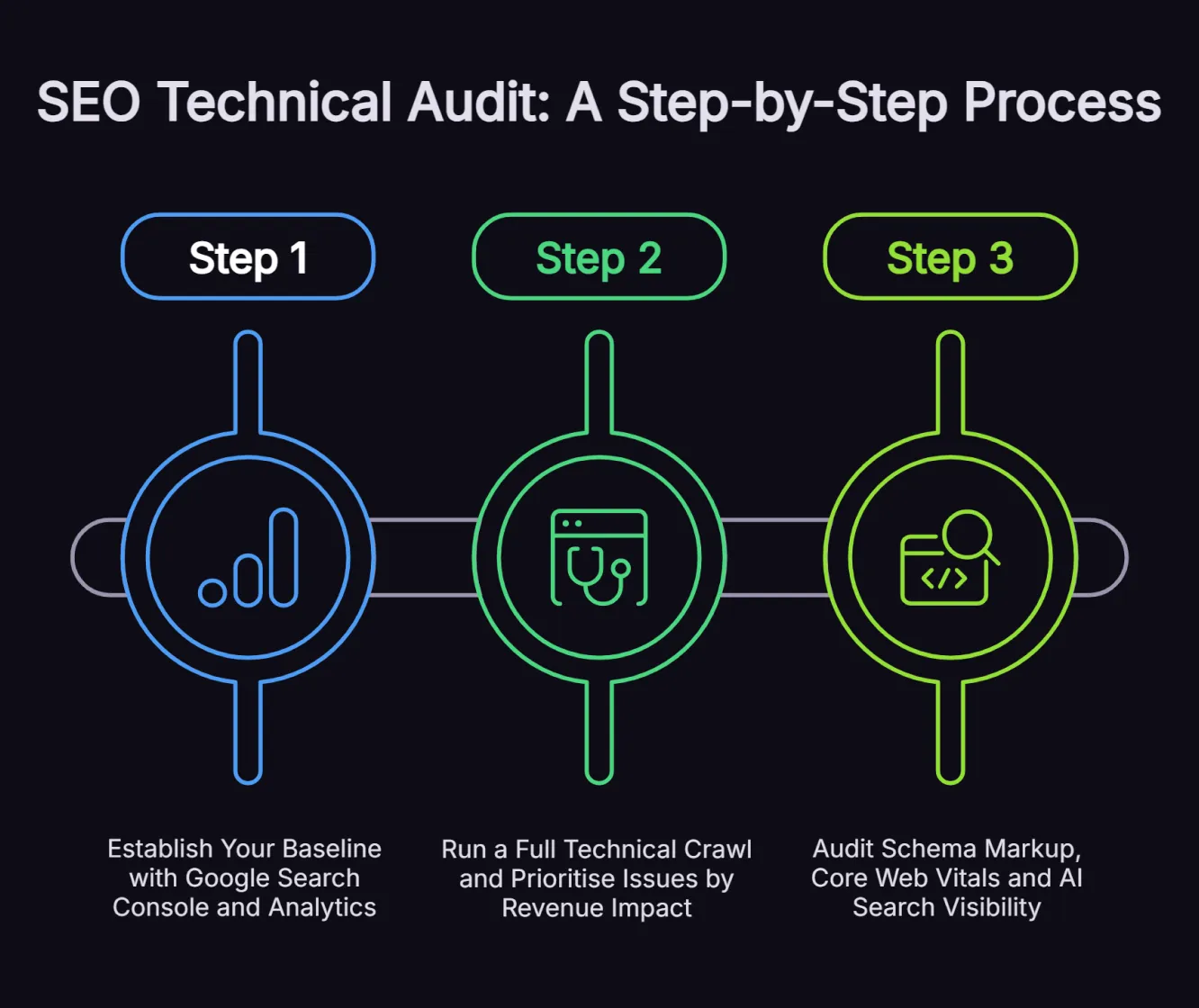

Step 1: Establish Your Baseline with Google Search Console and Analytics

Your first job is to understand how search engines currently experience your site. Open Google Search Console and review the Page indexing report. This shows you which pages are indexed, which are excluded and the reasons for each exclusion. Cross-reference this against your crawl data to identify patterns - if entire sections of your site are excluded, that is a structural signal, not a random error.

Pull your organic traffic data from GA4 at the same time. Segment by landing page so you can see which pages are driving value and cross-reference that with their technical health later in the process. This baseline step also establishes which pages should be prioritised in the audit - the ones closest to revenue deserve more scrutiny than low-traffic informational pages.

Step 2: Run a Full Technical Crawl and Prioritise Issues by Revenue Impact

Use an enterprise-grade crawler - Screaming Frog, Sitebulb or the Ahrefs Site Audit tool - to crawl your entire site. The crawl will return a list of technical issues across:

- Response codes

- Redirects

- Canonical tags

- Meta tags

- Page speed signals

- Internal linking structure

The critical step most audits skip is prioritisation. Not every issue on that list matters equally. A 404 error on an obsolete blog post from 2019 is not the same as a 404 on a key service page. Redirect chains on high-authority URLs cost you more link equity than chains on low-traffic pages. Revenue-based prioritisation - ranking fixes by their likely business impact rather than just technical severity - is what separates a useful audit from a report that gathers dust.
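
Revenue-based prioritisation can be sketched as a simple scoring exercise. The severity scale, the scoring formula (severity multiplied by monthly revenue) and the figures below are all hypothetical; the point is only that the same technical issue ranks differently depending on the page it affects.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    url: str
    problem: str
    severity: int           # hypothetical scale: 1 (cosmetic) to 5 (blocks crawling or indexing)
    monthly_revenue: float  # revenue attributed to the page, e.g. from landing-page data


def prioritise(issues: list[Issue]) -> list[Issue]:
    """Sort issues so that the highest severity-times-revenue score comes first."""
    return sorted(issues, key=lambda i: i.severity * i.monthly_revenue, reverse=True)


# Hypothetical crawl findings.
issues = [
    Issue("/blog/2019-post/", "404", severity=4, monthly_revenue=0.0),
    Issue("/services/technical-seo/", "404", severity=4, monthly_revenue=12000.0),
    Issue("/pricing/", "redirect chain", severity=2, monthly_revenue=8000.0),
]

for issue in prioritise(issues):
    print(issue.url, issue.problem, issue.severity * issue.monthly_revenue)
```

In this example the 404 on the service page comes first and the 404 on the old blog post comes last, even though a crawler would report both with the same severity.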

Step 3: Audit Schema Markup, Core Web Vitals and AI Search Visibility

With crawl data in hand, move to the three areas that have grown most in importance since 2024. For Core Web Vitals, use Google's Chrome UX Report (CrUX) to access real-world performance data rather than lab-based estimates. CrUX reflects how real-world Chrome users experience your pages and can be viewed by device type. Google uses CrUX data to inform the page experience ranking factor.
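
As a sketch of pulling field data programmatically, the snippet below prepares a request for the CrUX API and extracts 75th-percentile values from a response. The helper names are hypothetical, the API key is a placeholder, and the sample response is made-up data shaped like the API's documented output; check the current CrUX API documentation before relying on the field names.

```python
import json
import urllib.request

CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord"


def build_crux_request(origin: str, api_key: str, form_factor: str = "PHONE") -> urllib.request.Request:
    """Prepare (but do not send) a POST request for origin-level field data."""
    body = json.dumps({"origin": origin, "formFactor": form_factor}).encode("utf-8")
    return urllib.request.Request(
        f"{CRUX_ENDPOINT}?key={api_key}",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def p75_values(response: dict) -> dict[str, float]:
    """Extract the 75th-percentile value for each metric that reports percentiles."""
    metrics = response.get("record", {}).get("metrics", {})
    return {
        name: float(metric["percentiles"]["p75"])
        for name, metric in metrics.items()
        if "percentiles" in metric
    }


# Made-up response fragment for illustration only.
sample_response = {
    "record": {
        "metrics": {
            "largest_contentful_paint": {"percentiles": {"p75": 2300}},
            "cumulative_layout_shift": {"percentiles": {"p75": "0.05"}},
        }
    }
}

print(p75_values(sample_response))
```

To send the request you would pass it to `urllib.request.urlopen` and decode the JSON body; that step is left out here so the example runs without network access or credentials.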

For schema, run your key pages through Google's Rich Results Test and cross-check the structured data against your page content. Inconsistencies - where your schema describes something different from what is visible on the page - can undermine rich result eligibility and make your content harder for AI systems to interpret reliably. Review your top pages for schema type coverage and identify gaps where additional markup would support both traditional SEO and AI visibility. Our guide to topical authority covers in detail how content structure connects to technical signals.

For AI visibility, spot-check key queries in ChatGPT, Perplexity and Google AI Overviews to see which sources are being cited. If competitors appear in AI responses for your target terms and you do not, review the following technical and content factors before drawing conclusions about the cause:

- Crawlability

- Rendering

- Internal linking

- Source accessibility

- Content quality

How Often Should You Run an SEO Technical Audit?

The short answer is: more often than most businesses currently do. A full technical audit should be completed at minimum once per year, with a lighter monthly health check to catch new issues before they compound.

Several specific triggers should also prompt an immediate audit. A significant website redesign or platform migration can introduce dozens of technical issues overnight. A major Google update can coincide with visibility changes worth investigating in Search Console. Sudden unexplained drops in organic traffic are often traceable to a technical change, something that was quietly introduced and not noticed until rankings moved.

Technical SEO is not a one-off exercise. Sites evolve:

- New pages are published

- Old ones are removed

- CMS updates change rendering behaviour

- Tracking changes affect crawl patterns

Monthly monitoring between full audits ensures that new issues are caught early, before they have had time to erode the performance gains your content and link building work have delivered.
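
A monthly health check can be as simple as comparing two crawl exports. The sketch below assumes each crawl has been reduced to a dictionary of URL to HTTP status code; the function name and the sample data are hypothetical.

```python
def regressions(previous: dict[str, int], current: dict[str, int]) -> dict[str, tuple]:
    """Return URLs that newly return an error or have disappeared from the crawl.

    Each value is a (status_before, status_now) pair; None means the URL was
    absent from that crawl.
    """
    changed = {}
    for url, status in current.items():
        before = previous.get(url)
        if status >= 400 and (before is None or before < 400):
            changed[url] = (before, status)
    for url in previous.keys() - current.keys():
        changed[url] = (previous[url], None)
    return changed


# Hypothetical crawl snapshots taken a month apart.
last_month = {"/": 200, "/services/": 200, "/blog/old/": 404, "/pricing/": 200}
this_month = {"/": 200, "/services/": 404, "/blog/old/": 404, "/new/": 500}

print(regressions(last_month, this_month))
```

Long-standing errors such as `/blog/old/` are left out on purpose, so the output only contains what changed since the last check.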

SEO Technical Audit Tools

A credible technical audit requires a combination of data sources rather than any single tool. Each tool sees a different slice of the picture.

Google Search Console

The closest you get to Google's own view of your site. It shows:

- Indexation status

- Manual actions

- Core Web Vitals data from real users

- Crawl errors

- Schema validation warnings

It is free and should be the first tool anyone opens in any audit process.

Screaming Frog SEO Spider

The industry standard for comprehensive site crawls. It maps your full URL structure and identifies:

- Broken links

- Redirect chains

- Duplicate content

- Missing meta tags

- Internal linking gaps

Its embedding-based analysis can also surface semantically similar and low-relevance content, helping you identify pages that may be weakening topical clarity.
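
To make the redirect-chain check concrete, here is a minimal sketch of the idea, not a description of how Screaming Frog implements it. It assumes you have a mapping of each redirecting URL to its target, for example from a crawl export; the paths are hypothetical.

```python
def redirect_chain(redirects: dict[str, str], url: str, max_hops: int = 10) -> list[str]:
    """Follow redirects from url and return every URL visited, in order.

    Stops at the first URL that does not redirect, when a loop is detected,
    or after max_hops redirects.
    """
    chain = [url]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in chain[:-1]:  # target was already visited: redirect loop
            break
    return chain


# Hypothetical redirect map.
redirects = {"/old": "/older", "/older": "/new", "/a": "/b", "/b": "/a"}

print(redirect_chain(redirects, "/old"))
print(redirect_chain(redirects, "/a"))
```

A result with more than two entries means the URL passes through at least one intermediate redirect, and the usual fix is to point the first URL straight at the final destination.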

Ahrefs Site Audit

Combines crawl data with backlink analysis, making it useful for identifying technical issues that interact with link equity. Its schema detection is also strong, returning a full inventory of structured data types across the site.

CrUX Vis

CrUX Vis is a Google dashboard for visualising weekly historical CrUX data over time. It is useful for trend analysis, while tools such as PageSpeed Insights and the CrUX API are often better for checking the latest field data for a specific URL or origin.

Google Rich Results Test

Validates your structured data against Google's schema eligibility requirements. It identifies errors and warnings in JSON-LD implementation and shows which rich result types a page is eligible for.

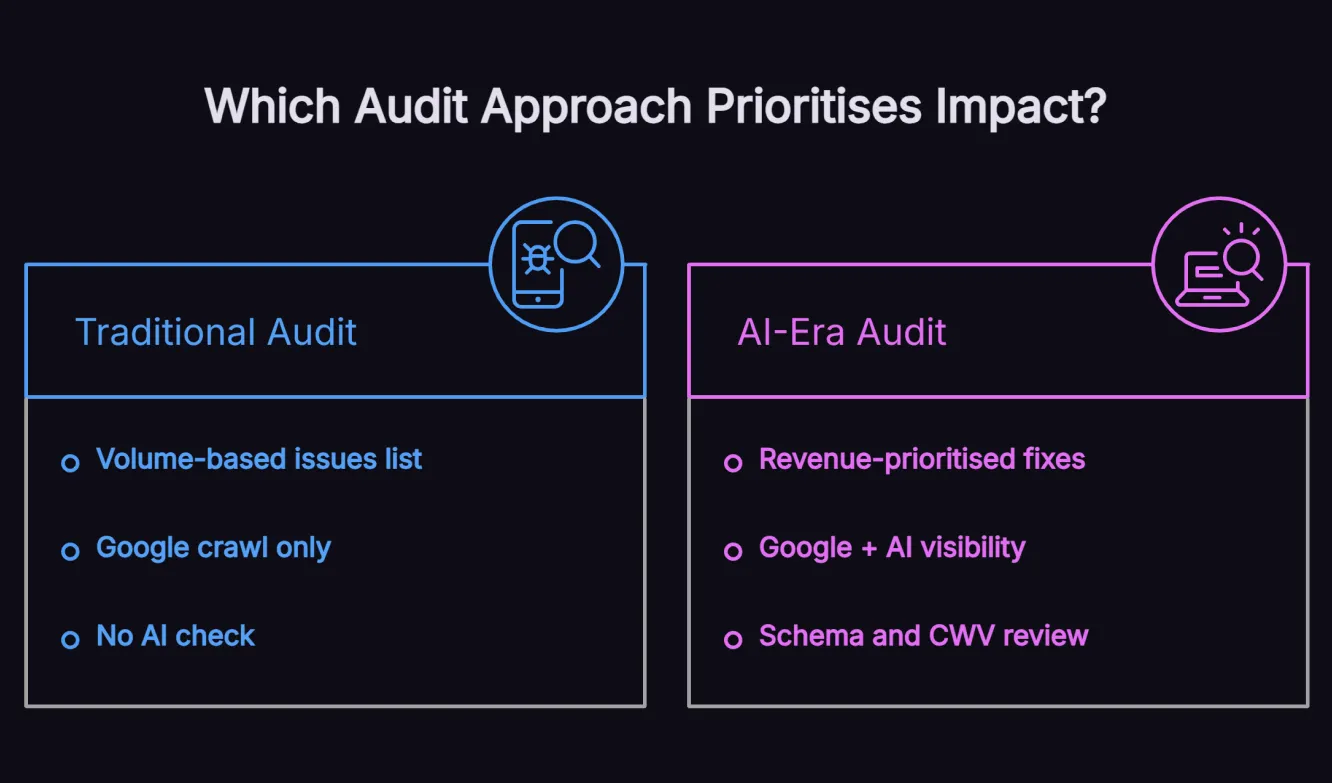

Why Most SEO Technical Audits Fall Short

A technical audit is only useful if it leads to the right fixes in the right order. Many audits fail not because they lack data, but because they lack prioritisation, strategic context and a modern view of how search visibility now works.

1. Volume Over Value

The most common failure in technical audits is confusing volume with value. A crawler report returns hundreds of issues. If your response is to share that raw list with your development team and ask them to work through it from top to bottom, you are almost guaranteed to spend significant development time on changes that have minimal search impact.

2. Technical SEO in Isolation

The second failure is treating technical SEO as isolated from content and AI strategy. Technical health determines how much value your content delivers, not just whether Google can find your pages. A site with brilliant content but poor Core Web Vitals, missing schema and a confusing internal link structure is often outperformed by technically sound competitors with average content. The most effective campaigns - like the eCommerce client whose technical health score improved from 82 to 99 - integrate technical work with content strategy from the start.

3. Ignoring AI Search

The third failure is ignoring AI search in the audit process. Technical SEO that only addresses Google is half an audit. ChatGPT, Perplexity, and Google AI features can only surface content they can access, crawl, and interpret reliably. Your audit should review the following technical factors alongside traditional SEO checks:

- Crawlability

- Rendering

- Structured data consistency

- Content accessibility

If your technical audit does not include an AI visibility check, you are missing one of the fastest-growing search channels available to your business. Our AI SEO strategy begins with a full technical foundation review precisely because technical health is the prerequisite for everything that follows.

Fix the Foundation Before It Costs You More

Every month that technical issues remain unresolved, they compound. Competitors improve their technical health while yours stays static. Crawl budget gets consumed by low-value URLs while important pages receive less attention. Schema gaps can reduce machine-readable clarity and rich-result eligibility, which may limit how confidently platforms interpret your content. Technical SEO debt is real, and it has a cost that most businesses do not measure because it shows up as absence rather than error.

If your rankings have plateaued despite strong content and link building, a professional SEO technical audit is likely the most efficient next step. Issues that have accumulated over months or years can often be identified and prioritised in a single comprehensive review, giving your development team a clear fix list with revenue-based justification for each change.

Frequently Asked Questions

How long does an SEO technical audit take?

The time required for an SEO technical audit depends on the size, complexity and condition of the website. Smaller sites can usually be audited more quickly, while larger sites with thousands of URLs often need more time for crawl analysis, Core Web Vitals assessment and schema review across key page types. What you should receive is a written audit with prioritised recommendations, not just a raw data export that still needs interpretation.

How often should you run an SEO technical audit?

A full SEO technical audit should be completed at least once per year, with monthly health checks in between. You should also run an immediate audit after any significant website redesign, platform migration or major Google algorithm update. Sudden unexplained drops in organic traffic are another trigger - technical changes often go unnoticed until rankings move, and an audit is the fastest way to identify the cause.

What is the difference between a technical SEO audit and a full SEO audit?

A technical SEO audit focuses specifically on the infrastructure layer of your website: crawlability, indexation, page speed, schema markup and site architecture. A full SEO audit covers technical health alongside content quality, on-page optimisation, keyword positioning and backlink profile. The technical audit is the foundation of any serious SEO engagement because infrastructure issues must be resolved before content or link building investments can reach their full potential.

Does a technical SEO audit cover AI search visibility?

A modern technical audit should include AI visibility checks because AI-driven search products can only use content they can access and interpret accurately. Focus the audit on crawlability, rendering, accessibility, content structure and markup consistency without overstating any single factor as a direct citation trigger.

What are the most damaging technical SEO issues?

The most damaging issues are those that prevent pages from being crawled or indexed at all: incorrect robots.txt directives, noindex tags on pages that should rank, pages blocked by authentication or JavaScript rendering problems and severe Core Web Vitals failures on key commercial pages. After these, broken or chained redirects, missing schema on high-value pages and duplicate content that confuses indexing signals are the issues most consistently tied to ranking suppression.

Can I run an SEO technical audit myself?

Yes, with the right tools and technical knowledge. Google Search Console, Screaming Frog and the Chrome UX Report are all accessible to anyone with site access. The challenge is not data collection - it is interpretation and prioritisation. Understanding which issues matter for your specific site, which can be safely ignored and how to translate technical findings into revenue-based development priorities typically requires experience across many sites and audits. Many businesses find that a professional audit returns significantly more value than the time cost of a DIY process.

Fix the Foundation, Unlock the Growth

Strong SEO performance starts with technical clarity. When crawl paths, schema and site performance are working as they should, every content and link-building investment has a better chance to deliver. Run the audit, prioritise fixes by impact and treat technical SEO as the foundation for sustainable growth. When you need help turning findings into action, our technical SEO consultant service can help you move from diagnosis to results.
